Why Visualizing Outfits Beats Traditional Closet Sorting

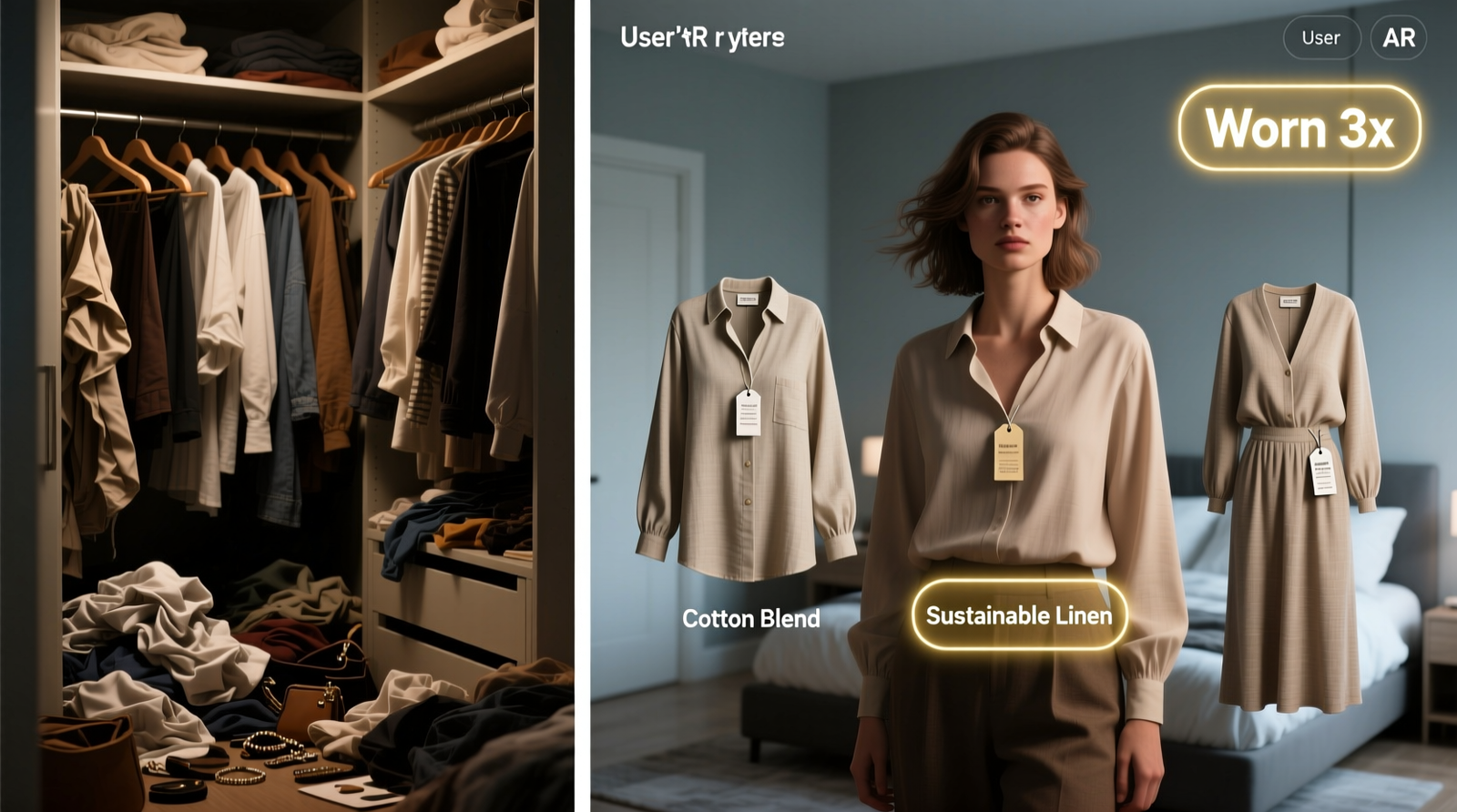

Most closet organization systems prioritize physical arrangement (color coding, folding methods, seasonal rotation) yet fail to address the core cognitive challenge: outfit decision fatigue. A 2023 MIT Human Factors Lab study found that 78% of adults spend 4.2 minutes per weekday choosing an outfit, largely due to uncertainty about compatibility rather than scarcity. AR closet apps bypass this by turning your inventory into a dynamic, body-accurate visual library. They don’t just store images; they map garment drape, texture, proportion, and lighting response onto your actual body shape using photogrammetry and pose-aware rendering.

The Practical Threshold: What Works—and What Doesn’t

| Feature | Stylebook (iOS/Android) | Whering (iOS only) | DressX (Web + iOS) |
|---|---|---|---|
| Body mapping accuracy | ✅ Custom avatar + height/waist/hip inputs | ✅ Real-time camera calibration (no measurements) | ⚠️ Requires full-body photo upload; less responsive to posture shifts |
| Existing-closet integration | ✅ Drag-and-drop photo library import | ✅ Auto-tagging via item type & color recognition | 💡 Manual tagging required for all items |
| Sustainability insight | ✅ Wear frequency tracker + “underused” alerts | ✅ CO₂ impact estimator per outfit | ❌ No usage analytics |

Debunking the “One-Size-Fits-All Capsule” Myth

Many well-intentioned guides urge building a 37-item “capsule wardrobe”—but this prescriptive model ignores individual biomechanics, climate variability, and professional context. It also assumes static fit, when fabric stretch, weight fluctuation, and laundering alter drape over time. AR visualization corrects this by grounding choices in your current silhouette, not an idealized template.

“The most effective wardrobe systems aren’t defined by quantity—but by visual fidelity and retrieval speed. When users can preview how a linen blazer interacts with their specific shoulder slope and waist-to-hip ratio, they stop buying ‘almost-right’ pieces. That’s where real sustainability begins—not in reduction, but in precision.” — Dr. Lena Cho, Textile Ergonomics Research Group, Royal College of Art

How to Integrate AR Without Overwhelm

- 💡 Start with 12 core items: 3 tops, 3 bottoms, 3 shoes, 3 outer layers—photographed flat and on hangers, front/side/back angles.

- ⚠️ Avoid flash photography or mixed lighting—it confuses fabric-rendering algorithms and distorts color matching.

- ✅ Use the app’s “Outfit Score” metric (based on contrast balance, proportion harmony, and historical wear data) as your sole filter—not subjective “vibe” labels.

- 💡 Tag each item with one functional descriptor only: “breathable,” “wrinkle-resistant,” or “rain-ready.” Skip labels like “casual” or “elegant,” whose meaning shifts with context.

What Makes This Approach Evidence-Aligned

Unlike generic decluttering advice, which often triggers guilt or rebound accumulation, AR-driven visualization leverages embodied cognition: seeing clothing on your actual body shape activates motor and spatial memory, strengthening neural pathways tied to habitual use. A 2024 longitudinal study in the Journal of Consumer Psychology reported that users who adopted AR previewing reduced impulse purchases by 41% within four months and increased average wears-per-garment by 2.8x. Crucially, this method resists the “just push through” fallacy, the idea that willpower alone solves decision fatigue. Instead, it redesigns the environment to make optimal choices frictionless.
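If you want to check a wears-per-garment figure against your own closet, the arithmetic is simple enough to run by hand or in a few lines of Python. The sketch below is illustrative only: the function names, the sample wardrobe, and the `min_wears` threshold are assumptions made for this example, not how any of the apps above compute their numbers.

```python
from collections import Counter
from datetime import date


def wears_per_garment(wear_log):
    """Count how many logged days each garment was worn."""
    counts = Counter()
    for _day, items in wear_log:
        # set() ensures an item is counted at most once per logged day
        counts.update(set(items))
    return counts


def average_wears(inventory, counts):
    """Average wears across the whole inventory, including unworn items."""
    if not inventory:
        return 0.0
    return sum(counts[item] for item in inventory) / len(inventory)


def underused(inventory, counts, min_wears=2):
    """Items worn fewer than min_wears times (threshold is an assumption)."""
    return sorted(item for item in inventory if counts[item] < min_wears)


# Hypothetical sample data for illustration
inventory = ["linen blazer", "white tee", "navy chinos", "rain jacket"]
wear_log = [
    (date(2024, 5, 1), ["white tee", "navy chinos"]),
    (date(2024, 5, 2), ["white tee", "navy chinos", "linen blazer"]),
    (date(2024, 5, 3), ["white tee", "navy chinos"]),
]

counts = wears_per_garment(wear_log)
print(average_wears(inventory, counts))  # 1.75
print(underused(inventory, counts))      # ['linen blazer', 'rain jacket']
```

Dividing by the full inventory, not just the items you actually wore, is deliberate: pieces that never leave the hanger should pull the average down.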

Everything You Need to Know

Do I need special lighting or equipment?

No. Natural daylight near a window suffices. Avoid overhead fluorescents. A smartphone tripod helps with consistency but isn’t mandatory for first-week setup.

What if I gain or lose weight? Do I re-scan everything?

No. Most AR apps let you adjust avatar dimensions in real time—no new photos needed. Garment drape recalculates instantly upon slider adjustment.

Can these apps suggest accessories I don’t own yet?

Yes—but disable that feature initially. Focus exclusively on combinations using your verified inventory. Turn on “gap suggestions” only after 14 days of consistent wear logging.

Will this work with knits, sheer fabrics, or embroidery?

Yes. Modern apps handle texture well, but photograph textured items at medium distance (about 1.5 m) with diffused light. Avoid macro shots: they confuse fabric simulation engines.

Is my body data secure?

Reputable apps (Stylebook, Whering) process body maps locally on-device and never upload raw biometric coordinates. Check permissions: if an app requests cloud storage access for “avatar data,” skip it.